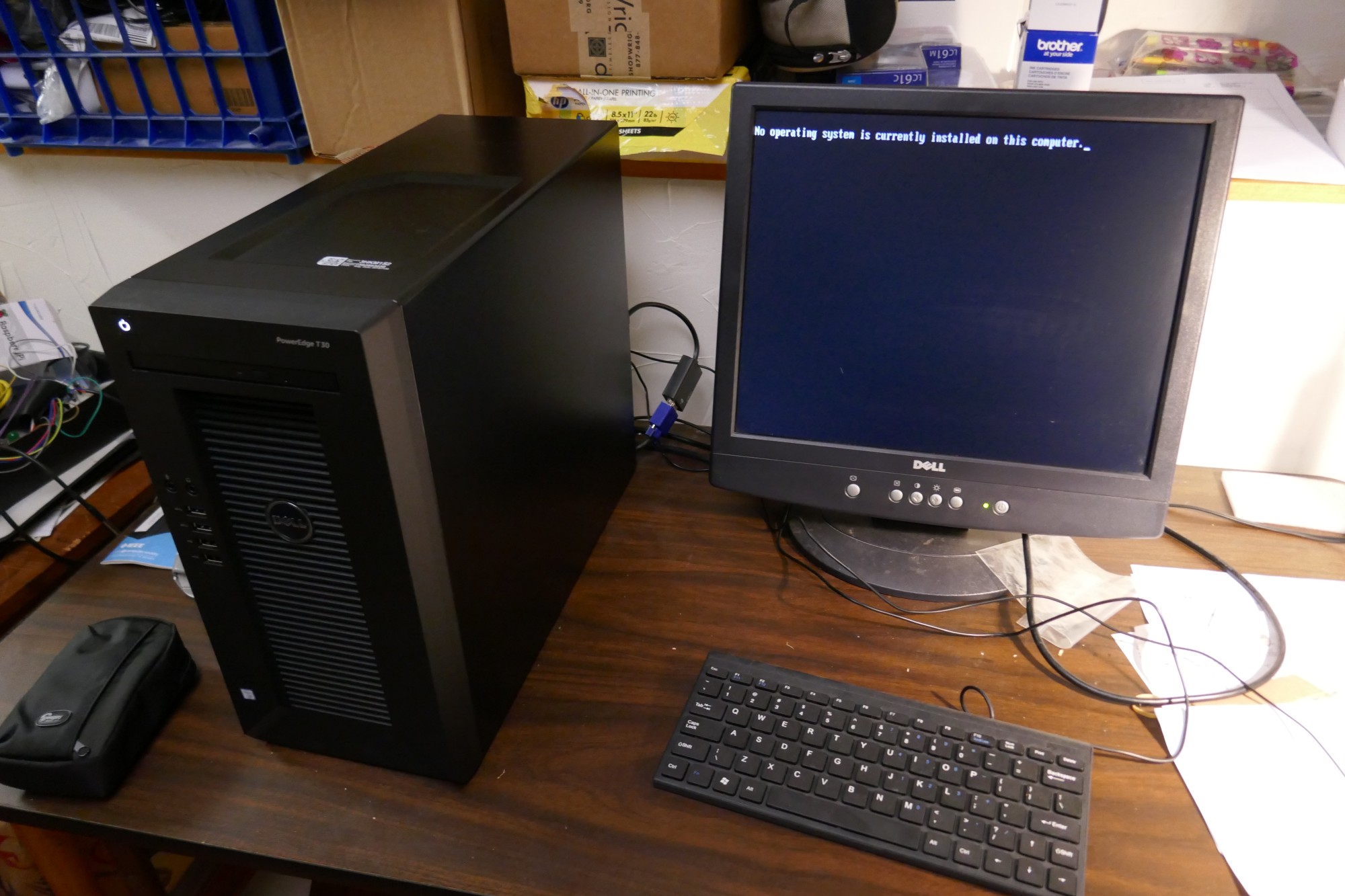

My 2018 Apollo "Desktop Server", or should I say "Server Desktop"?

My 2018 Apollo Desktop

This is one of a series of computer configuration stories, in reverse chronological order.

Project Motivation

Support a 4K display for photo editing, on a native Linux platform with Windows to be available within a VM. Provide large and reliable storage.

Approach

So, I recognized that I spend much of my desktop time viewing and editing still photos, many of which are 20 megapixels and above, and that I was doing so on a 1920x1080 (or 2 megapixel) display. Somehow, this seemed wrong. My desktop system dated from 2011, and was midrange at the time. I'd set it for Linux/Windows dual-boot, but (as with many geeks of a "certain age") rarely booted the Windows instance except to run Quicken. I could probably have gotten it to 4K resolution by installing a new graphics card but, hey, it was rather long in the tooth anyway. A project ensued.

I looked at contemporary desktop systems. Many seemed space-constrained and therefore hard to expand, and others had optimizations like Optane memory that didn't seem friendly to dual-boot or Linux configurations. I was happy and glad to do some tinkering, but wanted to be confident that I could achieve a working result that didn't target most of its power at the operating system I wasn't primarily using. I'd had good experience configuring a Dell PowerEdge T30 as a home server that's run almost continuously over the past year and thought it might be just the platform I was looking for. Among other attributes, I've been impressed with its quiet and energy-efficient operation, and had noted that the steps in its configuration Just Worked more smoothly and quickly than I'd thought they might.

I don't do gaming, so expected that the integrated HD P530 graphics support in its Xeon E3-1225v5 CPU (a workstation-class Skylake quad-core processor initially released in 2015, clocked at 3.3 GHz with a Passmark score of 7837) would be sufficient and suitable to drive a 4K display for my purposes. Bare metal Linux, eventually running Windows as needed within a VM, seemed to fit my usage and preferences well. When I saw this very system offered as a Small Business Doorbuster (do Small Businesses actually Bust Doors for sales, one wonders?) at $299, I clicked the requisite buttons to order a second server box and received it a few days later.

Getting it Running

Quite the minimal unpacking for a new computer, whose date stamp indicated that it was freshly manufactured earlier in the month.

It came without operating system, keyboard, or mouse. I think that the only other item enclosed besides the tower itself was a power cord. In short order, I plugged in a spare USB keyboard, mouse, and wireless NIC, connected an old display panel via a VGA-HDMI adapter, and installed Ubuntu 18.04 Desktop on the system's 1TB boot disk. Having done this, I was able to download and update the system's BIOS to its most current version (and thanks, Dell, for facilitating BIOS updates from Linux environments).

Running sysbench --threads=4 cpu run indicates about a 1.33x speedup relative to my immediately-preceding desktop, but I've rarely been a CPU-bound kind of user anyway.

I'm still learning about the 4K display world and, until my shiny new monitor arrived, didn't know which cabling would work, how well, or at what speeds. As of a day later, I'm now editing on the 4K monitor. It looks beautiful at Gnome 200% scaling, using an HDMI cable that I had lying around. I believe it's now running 3840x2160 @ 30 Hz. I went immediately to the 200% setting as 100% yielded windows and icons that were very tiny. Could probably do 150%, but Gnome doesn't currently seem to handle that out of the box. [UPDATE: As of Ubuntu 19.04, 150% scaling is now supported. I've switched to using it along with Wayland display management. I'm liking the result and am comfortably viewing denser content on my display, though I had to adjust some application display parameters to fit the environment.] Photos look excellent, but I'm not completely sure whether or not I'm actually scaling their data as well and need to look into that further. 4K video samples seem to stream nicely, even with a 72 Mb/sec wireless connection, though those designated as Chromecast HDR (?) sometimes blank intermittently, at least with Firefox. Maybe they want Chrome instead?

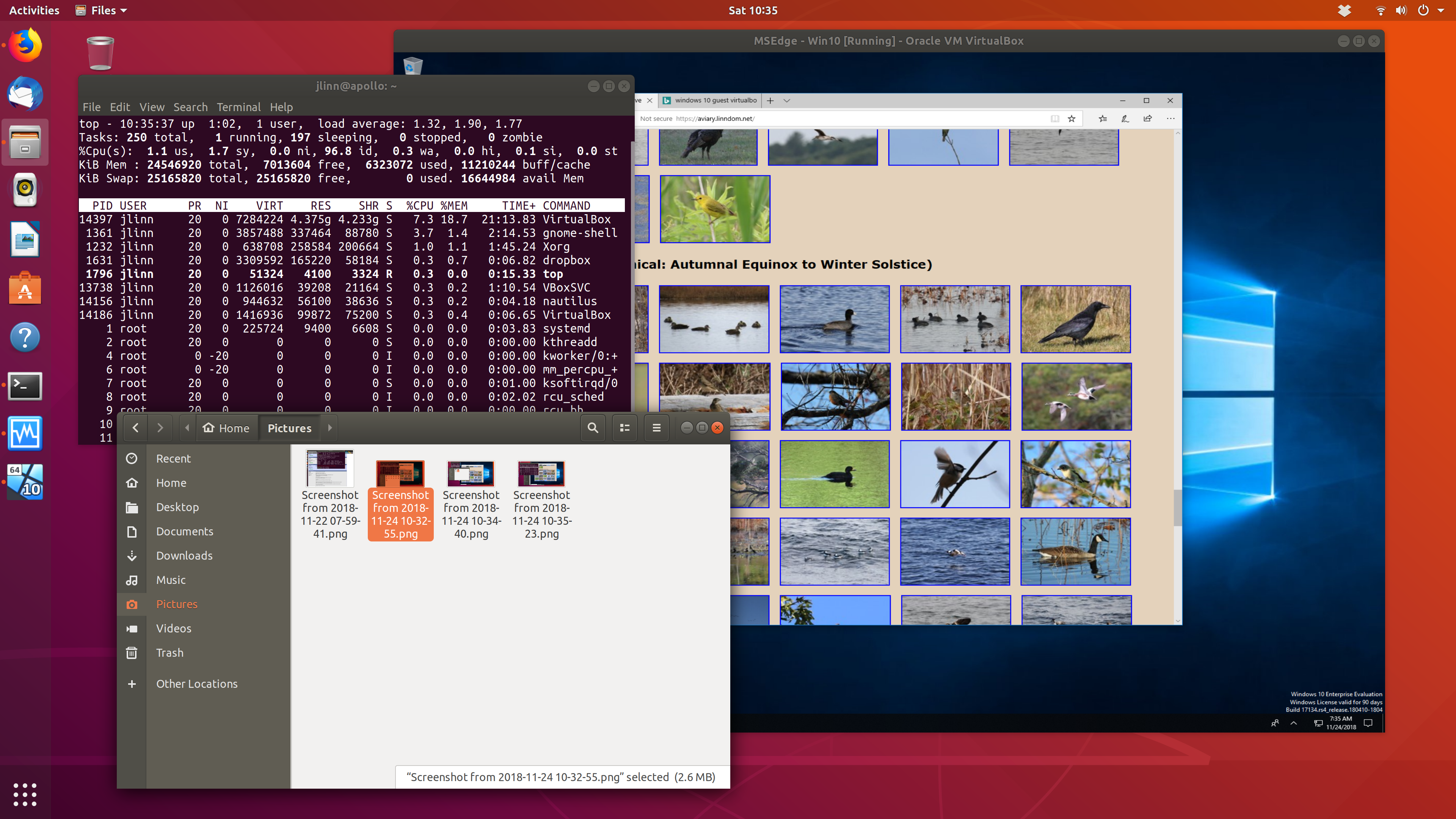

I downloaded an evaluation VirtualBox appliance with Win 10 (thanks, Microsoft!) and was able to get it running. By doing so, I was able to verify that the shared folder mechanism can access underlying data stored on an ext4 file system even though Windows wouldn't be able to read it directly.

I've also been pondering directory setup, and decided to configure a RAID1 pair with about 2.5-3 terabytes of space to hold /home. After maybe 30 minutes to install (using cabling and carriers conveniently included with the server) and partition the drives, I started the 4-5 hour process that mdadm requires to initialize the array.
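The multi-hour initialization can be watched through /proc/mdstat, where the kernel reports resync progress. The following is only a minimal sketch of parsing that report: the sample text, device names, and block counts are illustrative assumptions, not output captured from this system, and the function name is hypothetical.

```python
import re

# Illustrative /proc/mdstat contents (assumed values, not from the real array).
SAMPLE_MDSTAT = """\
Personalities : [raid1]
md0 : active raid1 sdc1[1] sdb1[0]
      2930133312 blocks super 1.2 [2/2] [UU]
      [====>................]  resync = 21.5% (629978662/2930133312) finish=273.8min speed=140000K/sec

unused devices: <none>
"""

# Matches the progress line that md prints while an array is resyncing or recovering.
PROGRESS_RE = re.compile(
    r"(resync|recovery)\s*=\s*([\d.]+)%.*?finish=([\d.]+)min.*?speed=(\d+)K/sec"
)


def resync_progress(mdstat_text):
    """Return (operation, percent_done, minutes_left, speed_k_per_sec), or None if idle."""
    match = PROGRESS_RE.search(mdstat_text)
    if match is None:
        return None
    operation, percent, minutes, speed = match.groups()
    return operation, float(percent), float(minutes), int(speed)


if __name__ == "__main__":
    # On a live system, replace SAMPLE_MDSTAT with open("/proc/mdstat").read().
    print(resync_progress(SAMPLE_MDSTAT))
```

With the assumed figures above, the remaining time works out to roughly four and a half hours, which is in the same range as the initialization time I saw.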

If I want to speed up boot time, I could replace the boot disk with a SATA SSD, but this may not really be necessary. The currently installed 24GB of RAM should be (far!) more than enough to handle VM configurations, but the as-shipped 8GB might have been too tight. So, main memory shouldn't be a problem. I've probably overprovisioned the disk and RAM capacity relative to the CPU, but so it is.

After creating the RAID array as discussed above, I merged my home directory from the prior Linux desktop, remounted, and transferred my photo collection into the resulting /home partition. The imported Thunderbird profile Just Worked. I split off a new VeraCrypt volume to hold Quicken data files separately from other encrypted data, to minimize the likelihood of interference that could result from opening a single volume from both the Ubuntu host and a Windows VM. Nice to see that the CPU workloads seem to be distributing effectively among the processor's four cores.

After I started using the system, I noticed that the screen would intermittently blank for a couple of seconds at a time (particularly, but not exclusively, during active editing), and would then resume normal operation. Apparently, this is a common issue when running at 4K resolution with slower cables; per specification, the graphics chipset outputs 3840x2160 @ 30Hz, which was introduced in HDMI 1.4 and is certified for operation only with High Speed cables and above. With a lower-rated cable, the display may blink as noise causes the computer and monitor to repeat their protocol handshake. Maybe my old HDMI cable wasn't quite the right thing after all, even though it usually works. I've now ordered a newest-spec Ultra High Speed cable and will see if that makes the blinks go away. And/or, I can try shifting to use the DisplayPort cables that came with the monitor. I've since reconnected with the supplied DP cable, and the display's now running at 3840x2160 @ 60 Hz. Haven't yet seen any screen blinking. Maybe I'll soon be receiving a redundant HDMI cable.
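The 30 Hz ceiling over HDMI and the 60 Hz result over DisplayPort are consistent with some simple pixel-clock arithmetic. This is a rough sketch, assuming the standard CTA-861 raster for 3840x2160 (4400 x 2250 total pixels including blanking) and the commonly cited TMDS clock limits of 340 MHz for HDMI 1.4 and 600 MHz for HDMI 2.0; I haven't checked which timings my monitor and graphics driver actually negotiate.

```python
# Total raster for 3840x2160 in the CTA-861 timings: active pixels plus blanking.
H_TOTAL, V_TOTAL = 4400, 2250

# Maximum TMDS clock rates in MHz (8 bits per colour component assumed).
HDMI_1_4_MAX_MHZ = 340
HDMI_2_0_MAX_MHZ = 600


def pixel_clock_mhz(refresh_hz):
    """Pixel clock needed to scan the full raster at the given refresh rate."""
    return H_TOTAL * V_TOTAL * refresh_hz / 1e6


for hz in (30, 60):
    clock = pixel_clock_mhz(hz)
    print(
        f"{hz} Hz needs {clock:.0f} MHz: "
        f"HDMI 1.4 ok={clock <= HDMI_1_4_MAX_MHZ}, "
        f"HDMI 2.0 ok={clock <= HDMI_2_0_MAX_MHZ}"
    )
```

Under those assumptions, 30 Hz needs 297 MHz, which fits within HDMI 1.4 but doesn't leave a great deal of margin for a marginal cable, while 60 Hz needs 594 MHz and is out of reach for an HDMI 1.4 output no matter which cable is attached.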

After a month or so of operation, I decided to take another hardware plunge, moving the system disk onto a Samsung 860 EVO SATA SSD. Clonezilla with default options Just Worked, and it reduced power-up boot time from 1:43 to 1:24 to reach the Ubuntu greeter. (Some of this startup time may derive from the Intel BIOS menu that I see after about 0:17 and try to ignore as it asks me to configure motherboard RAID, but it doesn't appear that this can be shut off.) Looking into the matter further, I adjusted initramfs to match my current swap file's UUID, and boot time is now at about 0:55. Ubuntu might be able to boot somewhat faster in UEFI than in the current legacy mode, but I'm disinclined to go down that reinstallation path and risk destabilizing my smoothly-running system. systemd-analyze time just told me that

Startup finished in 2.880s (kernel) + 8.368s (userspace) = 11.248s

graphical.target reached after 7.907s in userspace

so much of the near-minute may be outside OS control anyway. VM speedup is dramatic: start time for my Windows VM went from 0:58 to 0:14 once I moved the .vdi file onto the SSD. Application startup (in both Linux and Windows) seems snappier as well, but I lack an easily comparable means to quantify that.
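To put a number on "outside OS control", the systemd-analyze line can be set against the stopwatch readings. This is a back-of-envelope sketch only: the helper names are hypothetical, the parser handles just the simple seconds-only format quoted above, and the comparison is approximate because the stopwatch ran to the greeter rather than to the end of userspace startup.

```python
import re

# Output line quoted in the text above.
STARTUP_LINE = "Startup finished in 2.880s (kernel) + 8.368s (userspace) = 11.248s"


def parse_startup(line):
    """Map each phase name to its duration in seconds.

    Only plain 'N.NNNs (phase)' terms are handled; longer boots that
    systemd prints as '1min 2.345s' would need extra parsing.
    """
    return {name: float(secs) for secs, name in re.findall(r"([\d.]+)s \((\w+)\)", line)}


def mmss_to_seconds(text):
    """Convert a stopwatch reading such as '1:43' to seconds."""
    minutes, seconds = text.split(":")
    return int(minutes) * 60 + int(seconds)


phases = parse_startup(STARTUP_LINE)
os_seconds = round(sum(phases.values()), 3)
wall_seconds = mmss_to_seconds("0:55")
outside_os = round(wall_seconds - os_seconds, 3)

print(phases)
print(f"OS-controlled: {os_seconds}s of {wall_seconds}s; elsewhere: {outside_os}s")
for label, reading in [("HDD", "1:43"), ("SSD", "1:24"), ("SSD + initramfs fix", "0:55")]:
    print(f"{label}: {mmss_to_seconds(reading)}s to greeter")
```

By this reckoning, roughly 44 of the 55 seconds pass before the kernel takes over, so further OS tuning has little left to win.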

Pros and cons of this non-traditional approach

Good things

- Reliability features like RAM parity and space for a RAID disk pair

- Ability to customize, as with bare metal Linux, larger disks than typical preconfigured systems, and room for other expansion.

- That intangible "it's mine" factor, even though I didn't build it from the ground up

Less good things

- Requires more expensive ECC RAM

- CPU performance that's short of the bleeding edge, though with benchmark results comparable to those of current workstation products.

What didn't go so smoothly?

As noted, I was generally pleased or surprised with the way the configuration and migration process went. (Maybe I'm an inherent cynic or pessimist, or have just learned from experience.) Naturally, though, there were some exceptions, but most were more related to aspects of particular software than to the server platform.

Ubuntu's Shotwell photo organizer didn't react favorably when I moved the 23K-image photo library onto the new system and into a different location on the new file system. It computed for hours as it updated its database with particular, er, focus on images uploaded in raw formats. For these, it cycled around the directories creating multiple sidecar copies of .jpg versions of the images, using its own raw developer rather than that of the camera. (It was easy to see the difference, when former straight lines morphed into uncorrected pincushion distortion.) Noting that this didn't stop even once single sidecars had been produced, I took the sledgehammer step of stopping the program, mass-deleting the generated files with _shotwell in their names, and reimporting the result. This restored stable access to the photo collection, though then without the crops, exposure adjustments, and database-resident tags that I'd placed on some of the images.

In revisiting the Shotwell reinstallation in hopes of recovering my image tagging, I revived the old desktop, made a copy of the photo hierarchy in a new location, and am giving Shotwell another chance to run without interference. As I write, one of the CPU cores is at 100%, with a displayed total image count well below what should be present. After a few minutes, the library image count went down further, to almost none, CPU utilization continued but at a lower value, and the status window indicates that it's auto-importing. Will see how and if it stabilizes. Result wasn't good - now transferring underlying photo files from server across wireless LAN at (very roughly) 0.5GB/minute to reimport into fresh database, taking a total of about 10.9 hours. Should have carried one of my USB 3.0 disks instead!

I later figured out how to use SQLite Browser to merge in selected metadata from the earlier installation into Shotwell's PhotoTable. After various false starts trying to attach a second database, I exported the old installation's PhotoTable to CSV and imported it into a new table within a single database. Patterning after code in a helpful StackOverflow thread, I was able to use SQL of the form

REPLACE INTO PhotoTable ( named-columns ) SELECT named-columns FROM old-PhotoTable src INNER JOIN PhotoTable dest ON src.md5 == dest.md5

which matched up entries based on their hashes and replaced selected columns in those entries. I enumerated all the column entries in the select clause with "src.name" or "dest.name", to explicitly state which I wanted to retain from the existing PhotoTable and which I wanted to copy from the older data. This might have been more typing than I needed, but the result seems to have worked to restore my image transformations and comments, though not tags, which are encoded by listing tagged image identifiers in the TagTable. A couple of weeks later, I spent a couple of days in the instructive effort of writing a Python/SQLAlchemy program that went through database tables, parsed and mapped their contents, and recovered those tags as well.

After trying and failing to install Windows 10 from a purchased USB into a VM through various paths, I succeeded once I downloaded the ISO version that Microsoft provides. Some adjustment of VirtualBox parameters was necessary (as to enable EFI), and I'm still tweaking display mode parameters for best aesthetics and readability, but I've gotten it to the point of satisfying the "can run Quicken" use case. I stumbled on finding that VirtualBox doesn't boot VMs from USB (at least by default), and again when the ISO image I created from that USB wouldn't boot, claiming that there wasn't anything bootable. That might have changed had I known at that point that the EFI option is needed, but it's moot for me as I now have an operational VM in place. Once the VM was successfully running, I had a few further stumbles trying to find the Guest Additions virtual CD (a prerequisite for resolution greater than 1024x768), as it didn't appear on the menus within the guest where it was supposed to show up, but I found Oracle's direct download location and the result worked as desired. At least as seen within a VM, Windows' handling of high-DPI displays seems smoother than within the current version of Gnome.
So far, my Windows needs are being served quite satisfactorily within a 50GB VM (which can be enlarged later if needed), and it's quite agreeable to see Windows' update processing taking place within a window while still being able to use the underlying Linux system without interference! Further, I may never need to open a browser within the VM except for occasional test purposes, which should serve to limit its attack surface significantly.
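
Returning briefly to the Shotwell metadata merge described earlier: the REPLACE ... SELECT ... INNER JOIN pattern can be tried safely on a toy database before touching a real one. The sketch below uses Python's sqlite3 module with a deliberately cut-down, hypothetical schema; the real PhotoTable has many more columns, and OldPhotoTable, the sample rows, and the placeholder transformation string are all assumptions made for illustration.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.executescript("""
-- Cut-down stand-ins for the current table and the one imported from CSV.
CREATE TABLE PhotoTable (id INTEGER PRIMARY KEY, filename TEXT, md5 TEXT,
                         transformations TEXT, comment TEXT);
CREATE TABLE OldPhotoTable (id INTEGER PRIMARY KEY, filename TEXT, md5 TEXT,
                            transformations TEXT, comment TEXT);
INSERT INTO PhotoTable VALUES
    (1, '/home/me/photos/a.jpg', 'aaa', NULL, NULL),
    (2, '/home/me/photos/b.jpg', 'bbb', NULL, NULL);
INSERT INTO OldPhotoTable VALUES
    (7, '/old/photos/a.jpg', 'aaa', 'placeholder-crop', 'sunset'),
    (8, '/old/photos/gone.jpg', 'zzz', NULL, 'no longer present');
""")

# Keep id, filename, and md5 from the current row (dest); take the edits and
# comment from the old row (src). Rows are paired by matching md5 hashes.
conn.execute("""
REPLACE INTO PhotoTable (id, filename, md5, transformations, comment)
SELECT dest.id, dest.filename, dest.md5, src.transformations, src.comment
FROM OldPhotoTable src
INNER JOIN PhotoTable dest ON src.md5 = dest.md5
""")
conn.commit()

rows = conn.execute(
    "SELECT id, filename, transformations, comment FROM PhotoTable ORDER BY id"
).fetchall()
for row in rows:
    print(row)
```

Because REPLACE deletes the conflicting row and inserts a fresh one, any column left out of the list would fall back to its default, which is a good reason to enumerate every column explicitly as I did.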

One still-loose end concerns audio when played from within a VM. An Ubuntu VM seems (with limited testing) to work with default settings, but Windows (ah, why am I not surprised) didn't start out gracefully. Sound was crackly and distorted. Following Web wisdom about observed issues with VirtualBox guest audio, I downloaded and installed the (unsigned, hopefully free of malicious code...) Realtek audio driver and switched from the default PulseAudio setting to ALSA, also lifting the VM's processor cap in VirtualBox settings. This made the sound better than it was, but it still isn't very good. The audio configuration also seems fragile; I idly clicked the Dolby Access app in the preinstalled applications, after which my sound was disabled. I've now reinstalled the Realtek driver and created a "just in case" system restore point, and am hearing the vital Windows system sounds (even if in somewhat crackly form) once again.

Lesson learned in preparing a screencast: the Kazam recorder application doesn't play well with a Gnome-scaled display; needed to switch back down to 100% temporarily. Further, selection of a window doesn't track if the window is resized to become narrower; to show that, it works better to select a screen area enclosing the displayed window.

What did it cost?

Prices before tax and shipping, where applicable.

- Server platform: $299. Almost absurdly cheap. IIRC, I spent an additional $30 or so for a discounted (Doorbusted maintenance!?) 3-year parts warranty and $20 on an expansion cable set if I want to add more drives.

- 4K monitor: $450. Can't see 4K without one, and it looks very nice.

- 16GB ECC RAM: $207. Expensive, and I'd probably have been OK to upgrade the base system's 8GB by 8GB to a total of 16GB rather than the 24GB I now have.

- Two 4TB NAS-grade disks: $238. Could have gone without RAID1 or saved with less capacity, but I now have high-confidence storage with plenty of free space.

- Wireless NIC: $30. Servers don't inherently have wireless, and I definitely wanted 802.11ac. The USB widget I bought didn't end up working without Linux drivers that weren't instantly available, so I "borrowed" the PCI Express adapter I'd been using in my former desktop and that Just Worked.

- Windows 10 Home: $120. I hadn't paid the "Windows tax" on a system which shipped without any OS, so this was my "opportunity" to do so explicitly. It's neatly encapsulated inside a VM, though.

- 1 TB SSD: $150.
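
Adding up the line items is simple arithmetic, sketched below. It assumes that each listed figure is the total for that line (so $238 covers both disks) and keeps the warranty and cable set separate, since I only recall those amounts approximately.

```python
# Line items as listed above (USD, before tax and shipping).
costs = {
    "Server platform": 299,
    "4K monitor": 450,
    "16GB ECC RAM": 207,
    "Two 4TB NAS-grade disks": 238,  # assumed to be the price for the pair
    "Wireless NIC": 30,
    "Windows 10 Home": 120,
    "1 TB SSD": 150,
}

# Recalled only approximately ("$30 or so"), so tracked separately.
approx_extras = {
    "3-year parts warranty": 30,
    "Expansion cable set": 20,
}

listed_total = sum(costs.values())
total_with_extras = listed_total + sum(approx_extras.values())

print(f"Listed items: ${listed_total}")
print(f"Including approximate extras: ${total_with_extras}")
```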

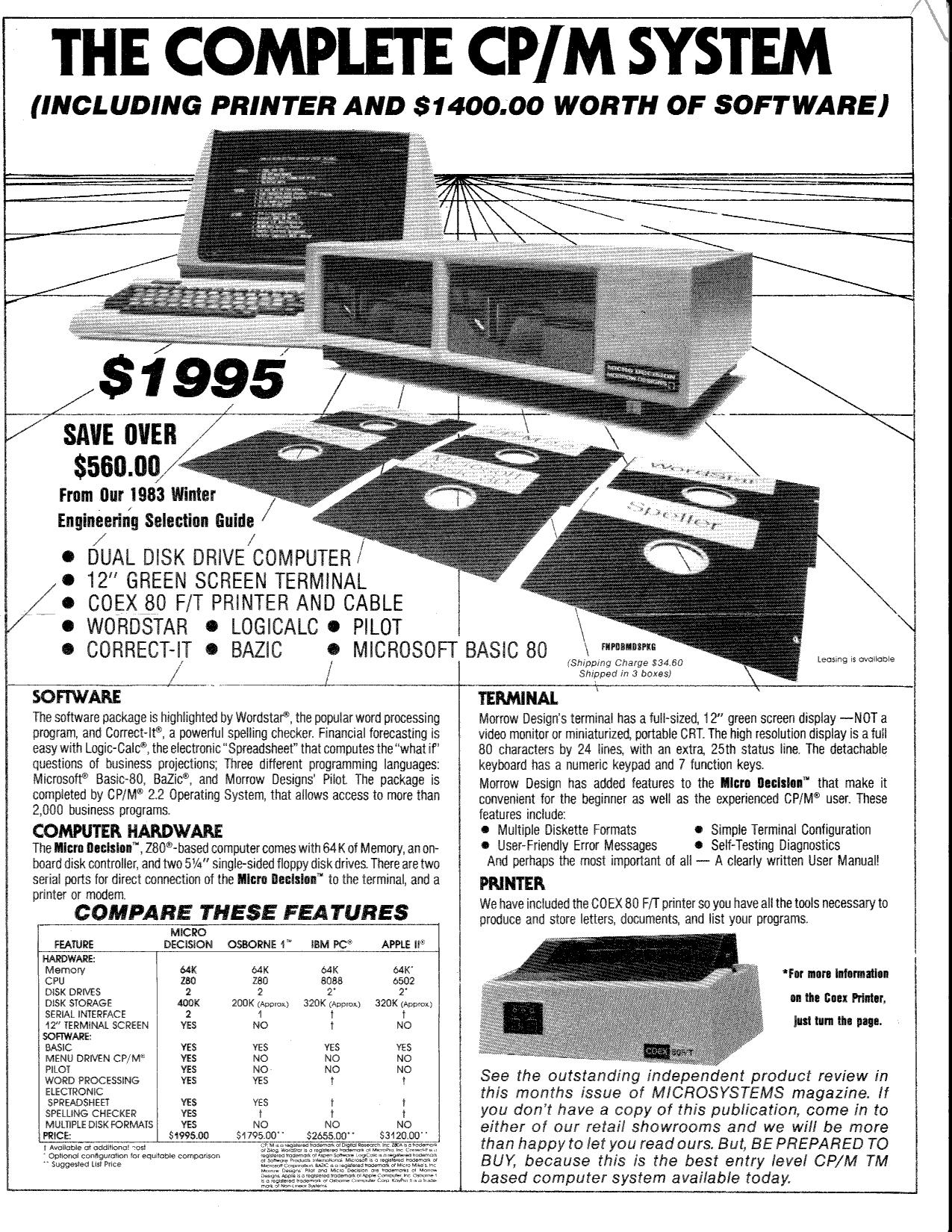

this 1983 CP/M system as offered in a Priority One Electronics flyer; in fairness, though, my new machine doesn't have the same bundled software or dot matrix printer. What a difference 35 years makes!
